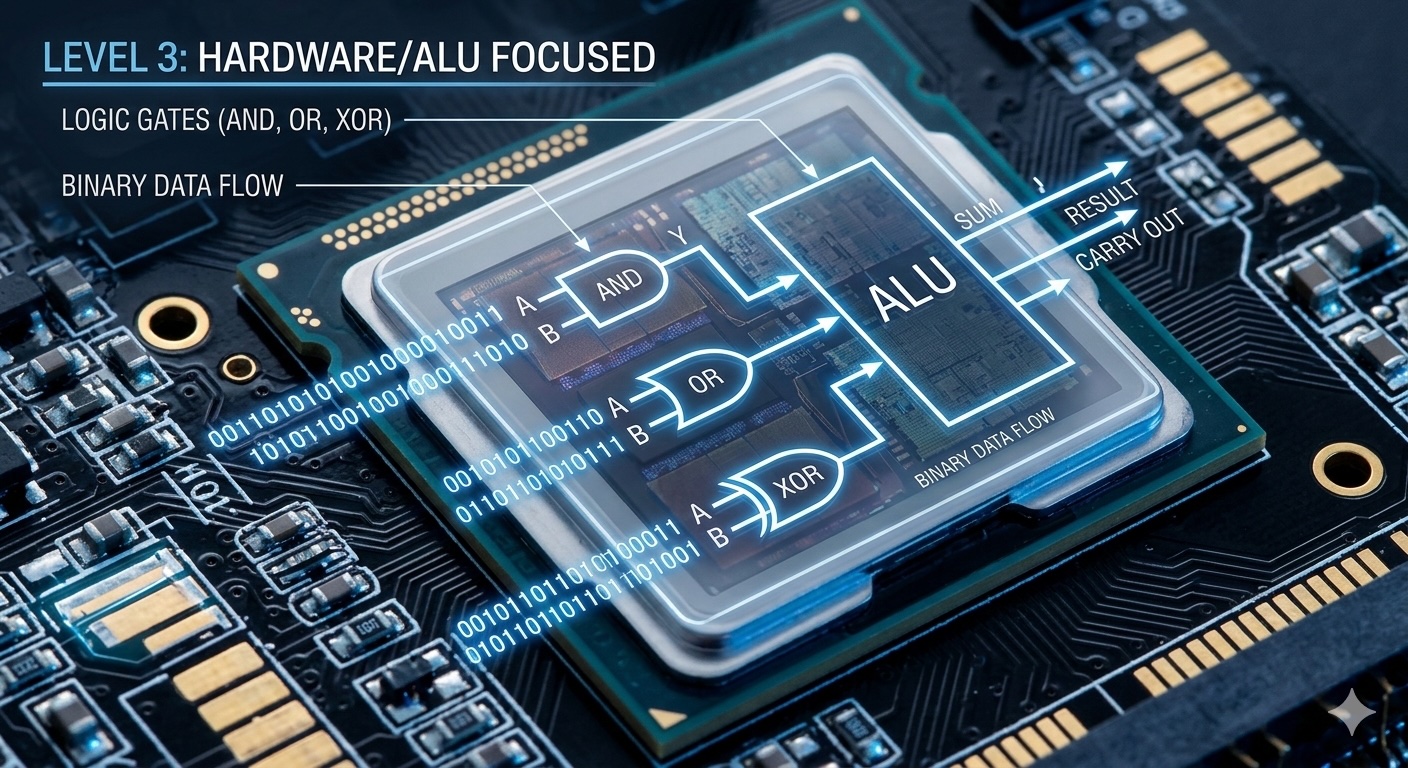

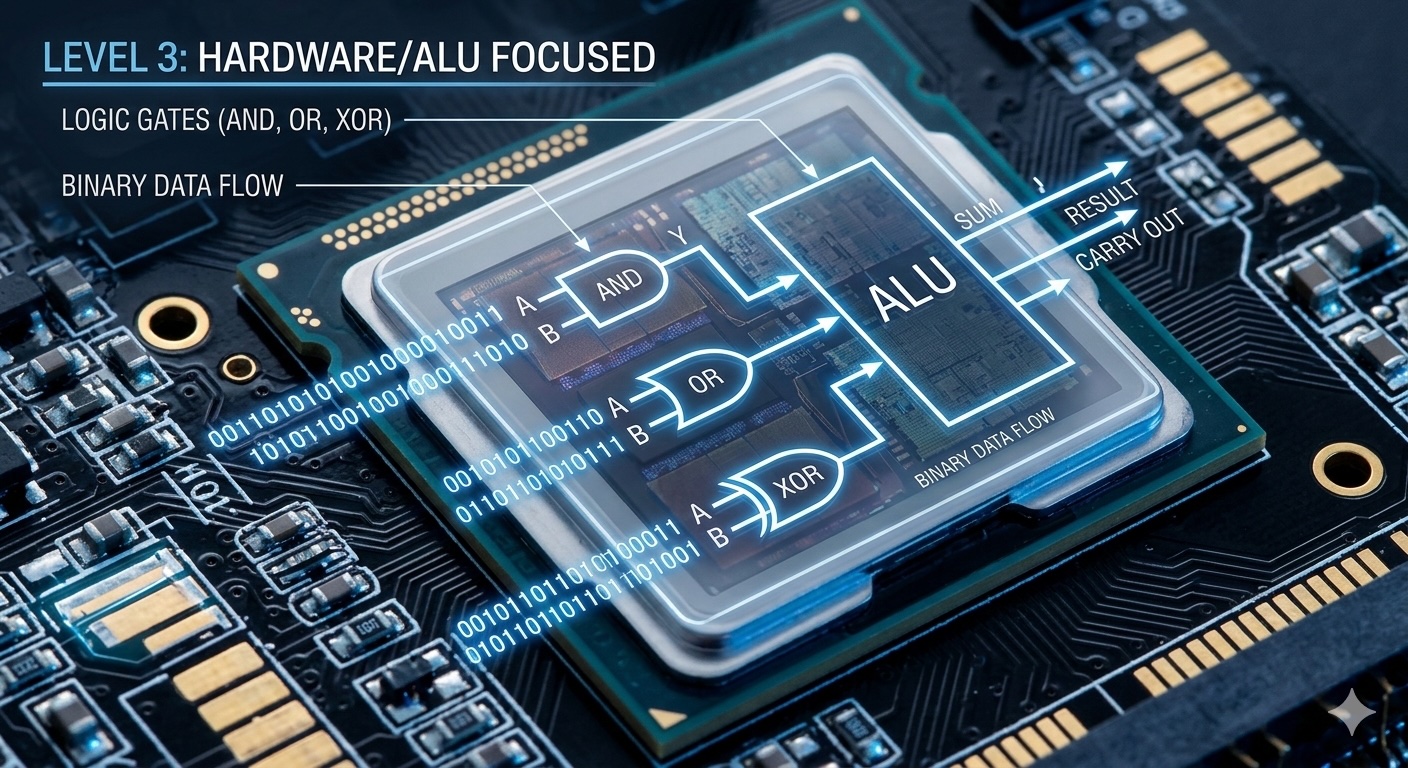

Understanding Binary Arithmetic — Level 3: Hardware & Optimization

Dive deep into hardware-level binary operations. Learn how CPUs perform addition, understand bitwise manipulation, and explore optimization strategies.

Dive deep into hardware-level binary operations. Learn how CPUs perform addition, understand bitwise manipulation, and explore optimization strategies.

Author

Mr. Oz

Date

Read

12 mins

Level 3

We've seen how to implement binary addition in software. Now let's understand what happens at the hardware level. How does a CPU actually add two numbers? What are the primitive operations that make all computation possible?

The Arithmetic Logic Unit (ALU) is the part of the CPU that performs mathematical operations. At its heart, it's a collection of logic gates that implement binary arithmetic.

All binary operations reduce to three fundamental gates:

AND: 0&0=0 0&1=0 1&0=0 1&1=1

OR: 0|0=0 0|1=1 1|0=1 1|1=1

XOR: 0^0=0 0^1=1 1^0=1 1^1=0

NOT: ~0=1 ~1=0

A half adder adds two single bits and produces a sum and carry:

A | B | Sum | Carry

--+---+-----+------

0 | 0 | 0 | 0

0 | 1 | 1 | 0

1 | 0 | 1 | 0

1 | 1 | 0 | 1

Two gates, two inputs, two outputs. This is the atomic unit of addition!

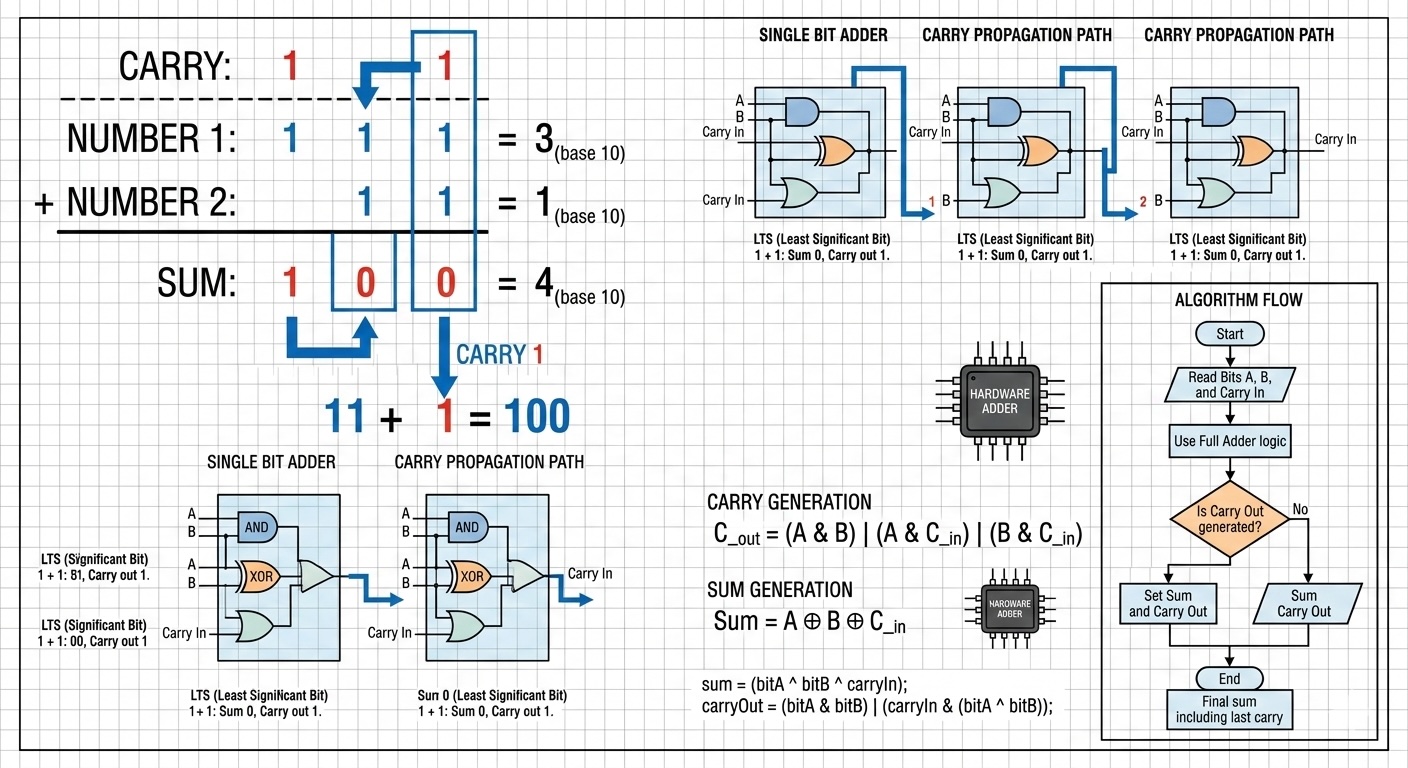

A full adder adds two bits AND a carry-in from the previous position:

A | B | Cin | Sum | Cout

--+---+-----+-----+------

0 | 0 | 0 | 0 | 0

0 | 0 | 1 | 1 | 0

0 | 1 | 0 | 1 | 0

0 | 1 | 1 | 0 | 1

1 | 0 | 0 | 1 | 0

1 | 0 | 1 | 0 | 1

1 | 1 | 0 | 0 | 1

1 | 1 | 1 | 1 | 1

Notice: when all three are 1, sum is 1 and carry is 1. That's 1+1+1=3, which is binary 11!

To add multi-bit numbers, we chain full adders together. Each adder's carry-out connects to the next adder's carry-in:

A[3] B[3] A[2] B[2] A[1] B[1] A[0] B[0]

↓ ↓ ↓ ↓ ↓ ↓ ↓ ↓

[Full Adder] [Full Adder] [Full Adder] [Half Adder]

↓ ↓ ↓ ↓ ↓ ↓ ↓ ↓

S[3] ← Cout S[2] ← Cout S[1] ← Cout S[0] (no Cin)

The problem: The carry must "ripple" through all stages. In the worst case (adding 111...1 + 1), the carry propagates through every adder. This is slow for large numbers!

Programming languages expose these hardware operations through bitwise operators:

// Add without + operator (demonstrates hardware principle)

int add(int a, int b) {

while (b != 0) {

int sum = a ^ b; // XOR: add without carry

int carry = (a & b) << 1; // AND + shift: find and move carry

a = sum;

b = carry;

}

return a;

}How it works:

a ^ b gives the sum ignoring carries (0+0=0, 0+1=1, 1+0=1, 1+1=0)a & b finds where both are 1 (where we need to carry)<< 1 shifts the carry to the next positionModern CPUs use carry lookahead to compute carries in parallel rather than waiting for them to ripple:

With these signals, we can compute whether position i will have a carry without waiting for previous positions to compute theirs. This reduces addition time from O(n) to O(log n) gate delays.

Expert insight: Intel and AMD CPUs use hybrid approaches: carry lookahead for most bits, with carry select for the final stages. This achieves single-cycle addition for 64-bit numbers.

bool isEven = (n & 1) == 0; // Check least significant bitint doubled = n << 1; // Multiply by 2

int halved = n >> 1; // Divide by 2 (integer)

int times8 = n << 3; // Multiply by 8a ^= b; // a = a XOR b

b ^= a; // b = b XOR (a XOR b) = original a

a ^= b; // a = (a XOR b) XOR original a = original b// Brian Kernighan's algorithm

int countBits(int n) {

int count = 0;

while (n) {

n &= (n - 1); // Clear the least significant set bit

count++;

}

return count;

}Computers represent negative numbers using two's complement:

Example: -5 in 8-bit two's complement

5 = 00000101

~5 = 11111010 (flip bits)

-5 = 11111011 (add 1)

This representation makes subtraction identical to addition: a - b = a + (-b). The same adder circuit works for both!

When adding signed numbers, overflow occurs if:

// Detect overflow in addition

bool overflow = (a > 0 && b > 0 && sum < 0) ||

(a < 0 && b < 0 && sum > 0);Modern CPUs have an overflow flag in the status register that's automatically set.

Addition is one of the fastest CPU operations:

| Operation | Typical Latency | Throughput |

|---|---|---|

| Integer Add (64-bit) | 1 cycle | 4 per cycle |

| Integer Multiply | 3-4 cycles | 1 per cycle |

| Integer Divide | 10-60 cycles | 1 per 10-60 cycles |

| Bitwise AND/OR/XOR | 1 cycle | 4 per cycle |

Addition and bitwise operations are essentially free compared to multiplication and division!

Modern CPUs support SIMD (Single Instruction, Multiple Data) operations that add multiple numbers simultaneously:

// AVX2: Add eight 32-bit integers in one instruction

__m256i a = _mm256_loadu_si256((__m256i*)array_a);

__m256i b = _mm256_loadu_si256((__m256i*)array_b);

__m256i sum = _mm256_add_epi32(a, b); // 8 additions at once!

_mm256_storeu_si256((__m256i*)result, sum);This is why modern computers are so fast at operations like image processing — they perform 8, 16, or even 32 additions per clock cycle!

Level 1

Learn the fundamentals of binary arithmetic through an engaging light switch analogy.

Author

Mr. Oz

Duration

5 mins

Level 2

Implementation details, string-based binary addition, and common algorithmic patterns.

Author

Mr. Oz

Duration

8 mins

Level 3

Hardware-level operations, bitwise manipulation, and CPU ALU design.

Author

Mr. Oz

Duration

12 mins