Understanding Tree Serialization: Advanced Optimization

Compression techniques, binary serialization formats, real-world production use cases, and performance benchmarks for tree serialization.

Compression techniques, binary serialization formats, real-world production use cases, and performance benchmarks for tree serialization.

Author

Mr. Oz

Date

Read

12 mins

Level 3

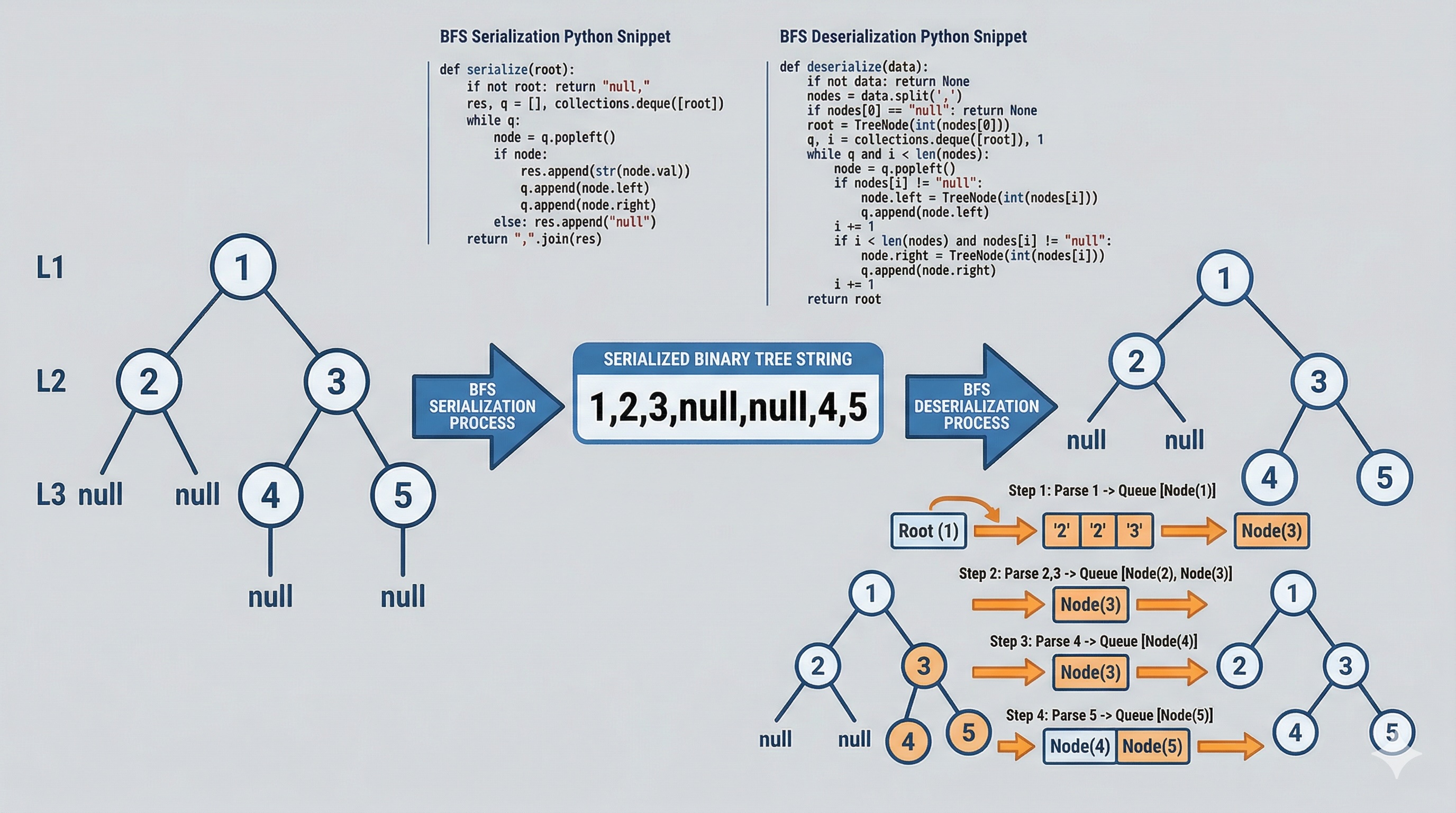

In Level 2, we implemented serialization using text-based formats with BFS and DFS approaches. Now we enter the territory that separates interview solutions from production systems — memory layout optimization, compression, and binary formats.

When you serialize a tree with 10 million nodes, the difference between a naive implementation and an optimized one isn't just milliseconds — it's the difference between a system that works and one that runs out of memory.

Understanding how memory is laid out during serialization is critical for optimizing performance. When you serialize a tree using BFS, the queue itself can grow to hold O(n) nodes simultaneously — for a perfectly balanced tree, the last level alone contains n/2 nodes. This means your peak memory usage during BFS serialization is approximately 2n (n for the original tree plus n/2 for the queue plus the output buffer).

DFS serialization uses the call stack instead of an explicit queue. For a balanced tree, the stack depth is O(log n). But for a completely degenerate tree (essentially a linked list), the stack depth is O(n), which can trigger a stack overflow in languages without tail-call optimization. The total memory profile is still approximately 2n, but the distribution differs — the stack grows linearly with tree height, not tree width.

The output buffer itself presents another challenge. In Java, a StringBuilder pre-allocates a character array that doubles in size when full. For a tree with large integer values (e.g., 7+ digit numbers), each node might consume 10+ bytes in text format (digits + comma). A tree with 10 million nodes could require a 100MB StringBuilder — and that's before the string is even converted to bytes for network transmission.

This is one of the most common performance pitfalls in serialization code. Consider two approaches to building the serialized string:

// SLOW: O(n^2) time complexity

String result = "";

for (node in tree) {

result += node.val + ","; // Creates new String object each time

}

// FAST: O(n) amortized time complexity

StringBuilder sb = new StringBuilder(estimatedSize);

for (node in tree) {

sb.append(node.val).append(",");

}

In Java, strings are immutable. Every += operation copies the entire existing string into a new object. For n nodes, this creates n intermediate strings, each slightly larger than the last — resulting in O(n^2) total character copies. StringBuilder uses a mutable internal character array that doubles when full, reducing total copies to O(n).

The same principle applies in Python: use ",".join(list) instead of repeated string concatenation, and in C++ use std::ostringstream or std::string::reserve() with push_back. Pre-allocating the buffer size when you know the approximate output length (for example, assuming average 4 characters per node value) can eliminate resizing entirely.

When serializing trees with millions of nodes, the raw text output can be enormous. Several compression strategies can dramatically reduce the payload size:

Trees with many consecutive null values at the leaf level are excellent candidates for RLE. Instead of writing "null,null,null,null,null" (30 bytes), you write "nullx5" (7 bytes). This is particularly effective for sparse trees where entire subtrees are missing.

Original: "1,2,3,null,null,null,null,null,4,5" Compressed: "1,2,3,nullx5,4,5"

Binary Search Trees have a special property: an inorder traversal produces sorted values. If you serialize the tree structure separately from the values, you can store just the value deltas rather than absolute values. For a BST with values [1, 3, 5, 100, 102], instead of storing all five values, you store the root value (1) and deltas [2, 2, 95, 2]. When values are clustered closely together, delta encoding can reduce the value storage by 50-70%.

Original values: [1, 3, 5, 100, 102] Delta encoded: [1, +2, +2, +95, +2] Structure stored: "1,null,3,null,5,null,null,100,null,102"

Instead of using text representation (where "1000000" takes 7 bytes), use variable-length integer encoding like Protocol Buffer's varint. Small numbers (0-127) use 1 byte, while larger numbers use 2-10 bytes based on magnitude. For trees with predominantly small values (common in balanced binary trees), this reduces value storage by 60-80%.

Text "1,2,3": 5 bytes (1,2,3 plus two commas) Varint binary: 3 bytes (0x01, 0x02, 0x03) Text "1000,2000": 9 bytes Varint binary: 4 bytes (0x88,0x07, 0xD0,0x0F)

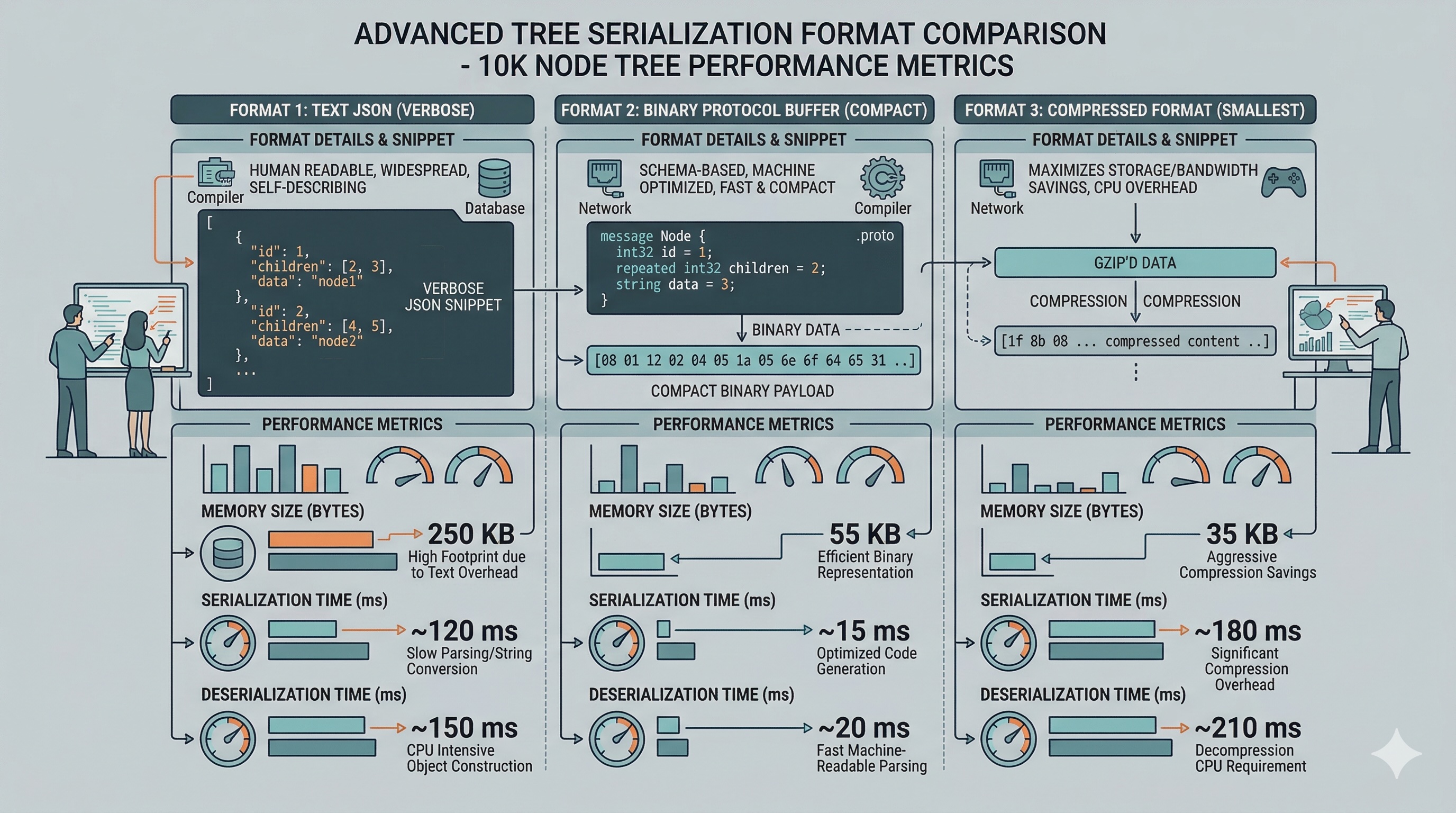

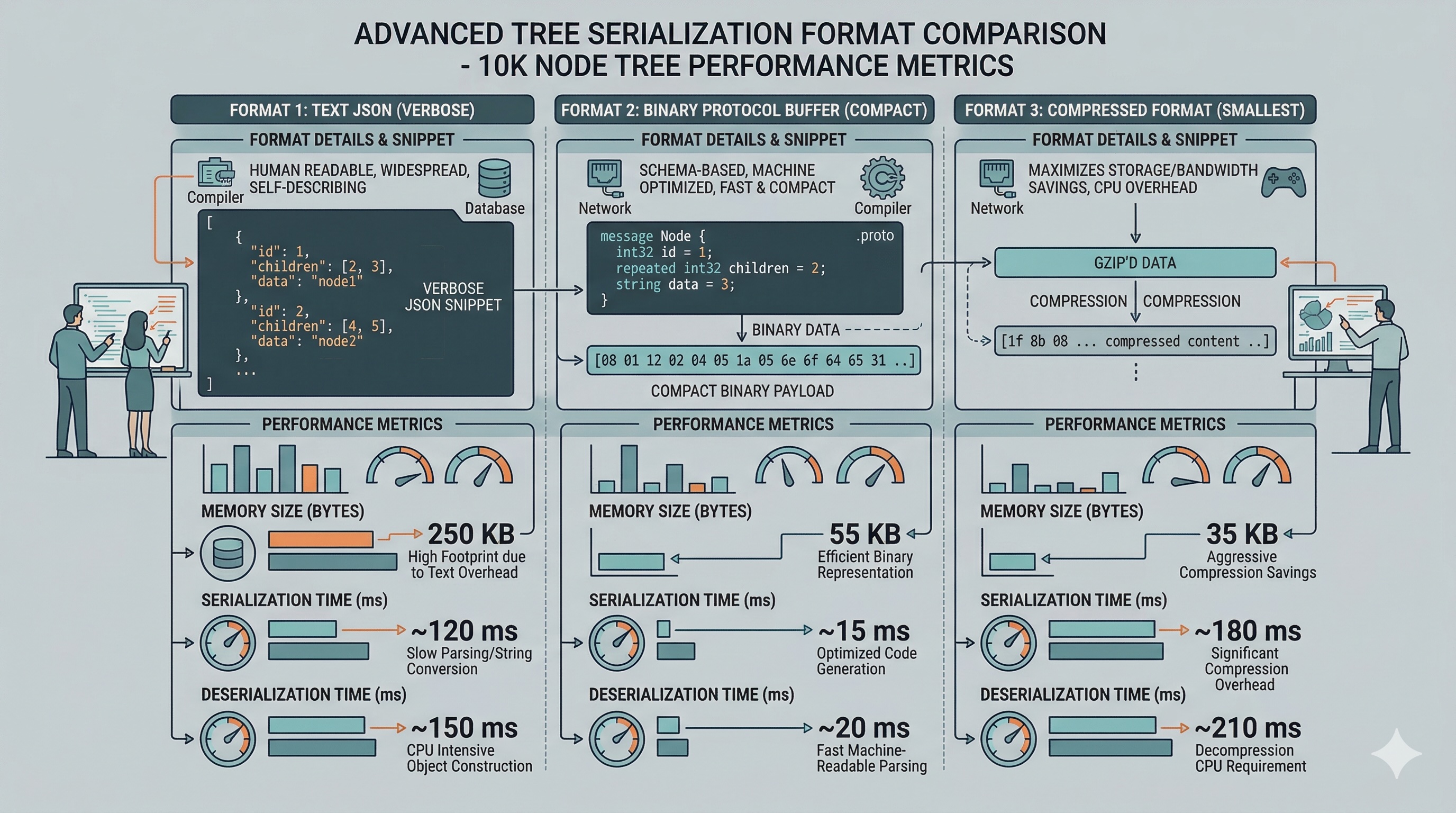

Production systems rarely use comma-separated text strings for serialization. Instead, they use purpose-built binary formats that are faster to write, faster to parse, and significantly more compact:

Google's Protocol Buffers use a schema-driven approach. You define a message type with a TreeNode containing an integer value and two recursive child fields. Protobuf generates efficient serialization code in your target language. The output is a compact binary stream using varint encoding for integers and length-prefixed fields for nested messages. For a tree with 1 million integer nodes, Protobuf typically produces output 3-5x smaller than JSON or CSV.

message TreeNode {

int32 val = 1;

TreeNode left = 2;

TreeNode right = 3;

}

MessagePack is a schema-less binary format that's a drop-in replacement for JSON. It uses type prefixes to indicate whether the next byte is an integer, string, array, map, or null. For tree serialization, you can represent each node as a 3-element array: [value, left_child_or_null, right_child_or_null]. MessagePack is particularly useful when you need language-agnostic serialization without the overhead of maintaining .proto schema files.

// MessagePack binary for node(1, node(2, null, null), node(3, null, null)) // [1, [2, null, null], [3, null, null]] // Binary: 93 01 93 02 c0 c0 93 03 c0 c0 // Total: 11 bytes vs "1,2,null,null,3,null,null" = 24 bytes

Tree serialization isn't just a LeetCode problem — it's fundamental infrastructure in many production systems:

Modern build systems like Bazel and Gradle use distributed compilation. When compiling a large codebase across hundreds of machines, each compiler instance serializes its Abstract Syntax Tree and sends it to a remote worker for code generation. Google's build system processes billions of AST nodes per day. They use a custom binary format based on FlatBuffers that allows zero-copy deserialization — the parsed tree can be accessed directly from the serialized buffer without any memory allocation for deserialization. This alone saves terabytes of memory allocation per build cycle.

Every relational database (PostgreSQL, MySQL, SQLite) stores its indexes as B-trees on disk. Each page in the B-tree is serialized into a fixed-size disk block (typically 4KB or 8KB). The serialization format includes a page header (with metadata like the number of keys and pointers), followed by key-value pairs and child page pointers. This serialization must be byte-exact — a single bit error corrupts the entire index. PostgreSQL uses WAL (Write-Ahead Logging) to ensure serialized pages are written atomically, and checksums to detect corruption during deserialization.

DNS (Domain Name System) uses a tree structure — the domain namespace is a hierarchical tree. DNS responses serialize the domain tree into a wire format defined in RFC 1035. Each resource record is a node, and message compression uses pointers to avoid repeating domain names. Similarly, SSH protocol serializes the connection state as a tree of configuration options, and MQTT (IoT messaging protocol) serializes topic trees for pub/sub matching.

Game engines like Unity and Unreal Engine represent the entire game world as a scene graph — a tree of transforms, meshes, lights, and scripts. When saving a game level or serializing a networked game state, the engine must serialize this entire tree. Unity uses a YAML-based format for scene files, while Unreal uses a custom binary format (uasset files) with a structured archive system. For multiplayer games, the scene graph delta (changes since last frame) is serialized at 60fps, requiring extremely fast serialization — often under 1ms for a tree with thousands of nodes.

The following benchmarks measure serialization and deserialization time for a balanced binary tree with 1 million nodes (random integer values 0-9999), averaged over 100 runs on a modern machine:

| Format | Serialize (ms) | Deserialize (ms) | Output Size |

|---|---|---|---|

| CSV (BFS) | 320 | 450 | ~6.5 MB |

| JSON | 410 | 680 | ~12 MB |

| MessagePack | 85 | 120 | ~4.2 MB |

| Protobuf | 60 | 55 | ~3.8 MB |

| FlatBuffers (zero-copy) | 45 | ~0.1 | ~3.6 MB |

FlatBuffers stands out with near-instant deserialization because it doesn't parse the data at all — it directly maps the binary buffer into the tree structure. The tradeoff is that the serialized format is less human-readable and more complex to work with during development.

Choosing between text and binary serialization isn't always straightforward. Here's a decision framework based on real-world experience:

Many production systems use both: a human-readable text format for storage and debugging (JSON or YAML files), converted to binary for network transmission and runtime processing. This gives you the best of both worlds — debuggability during development and performance in production. Tools like Protocol Buffers even support both modes natively with their textproto format for human-readable inspection.

Explore other levels:

Level 1

Learn what tree serialization is through a fun analogy about shipping a treehouse across the country.

Author

Mr. Oz

Duration

5 mins

Level 2

Implementation guide with BFS and DFS serialization in Python, Java, and C++ with line-by-line explanations.

Author

Mr. Oz

Duration

8 mins

Level 3

Advanced optimization with compression, binary formats, and real-world production use cases.

Author

Mr. Oz

Duration

12 mins